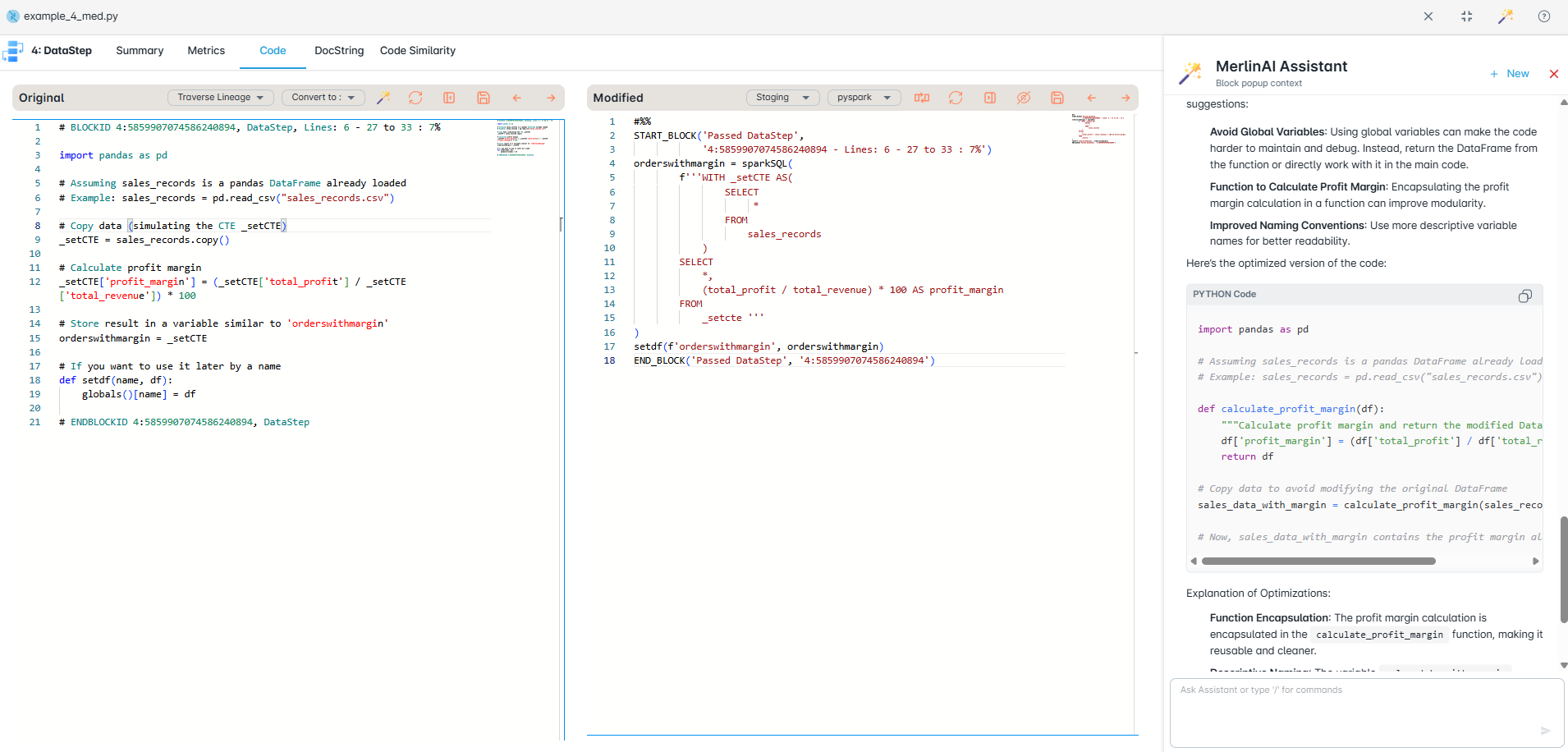

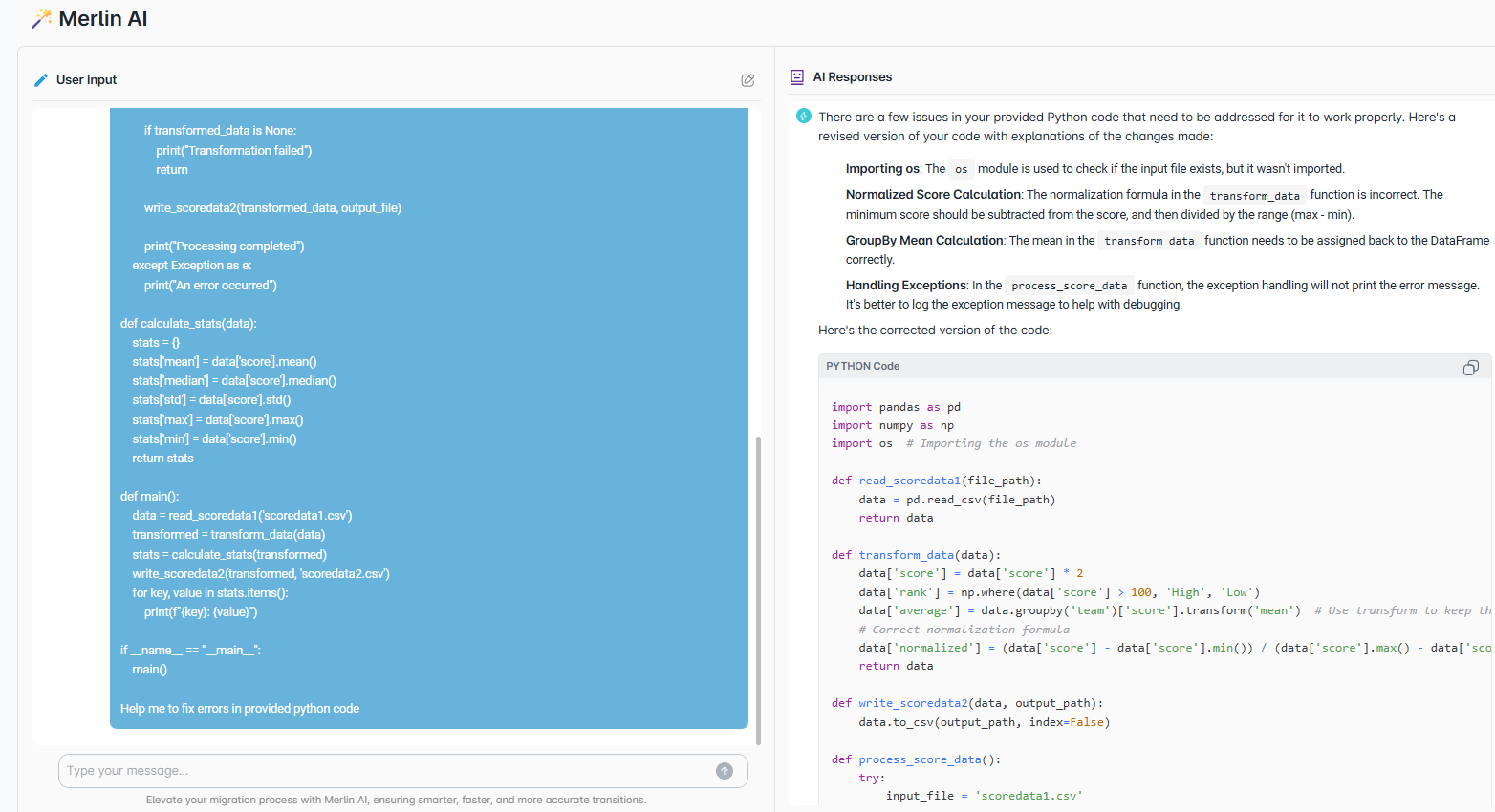

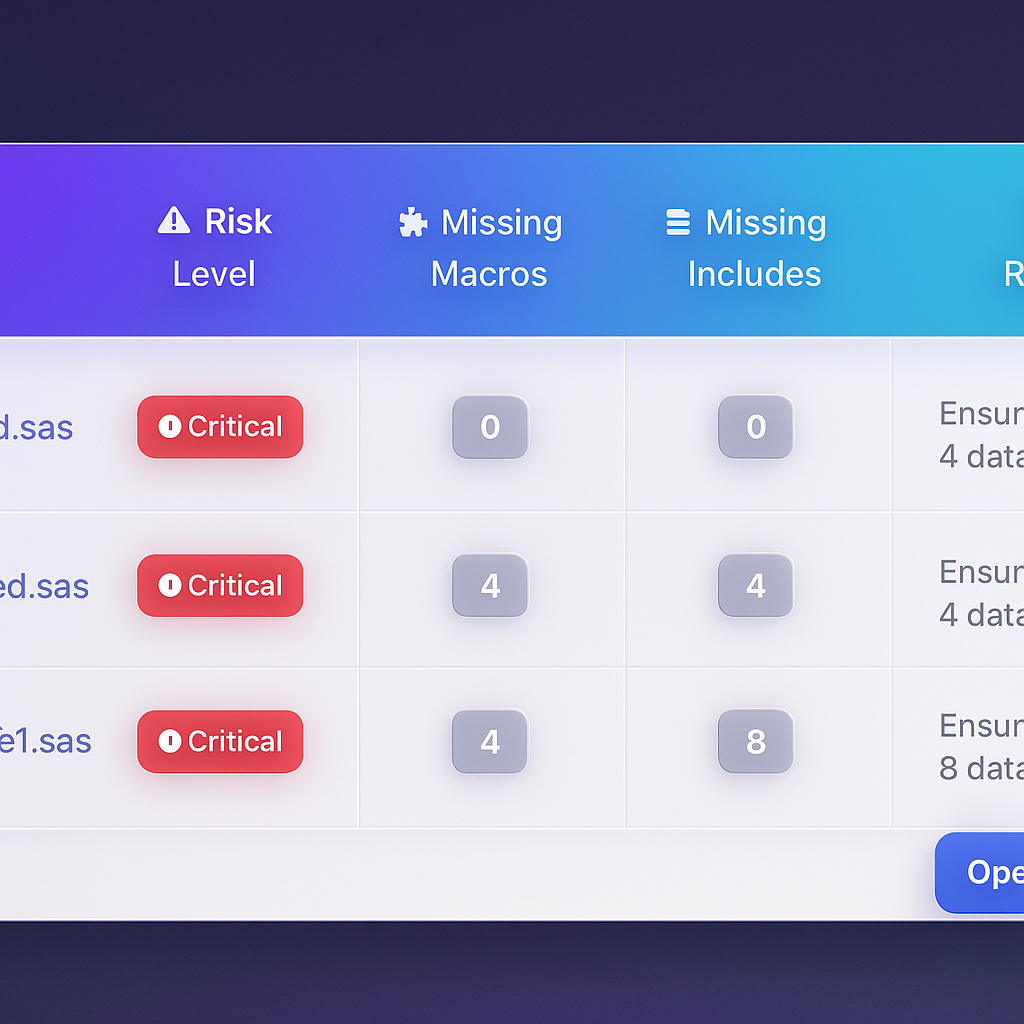

- Automated code assessment for rationalization and migration planning

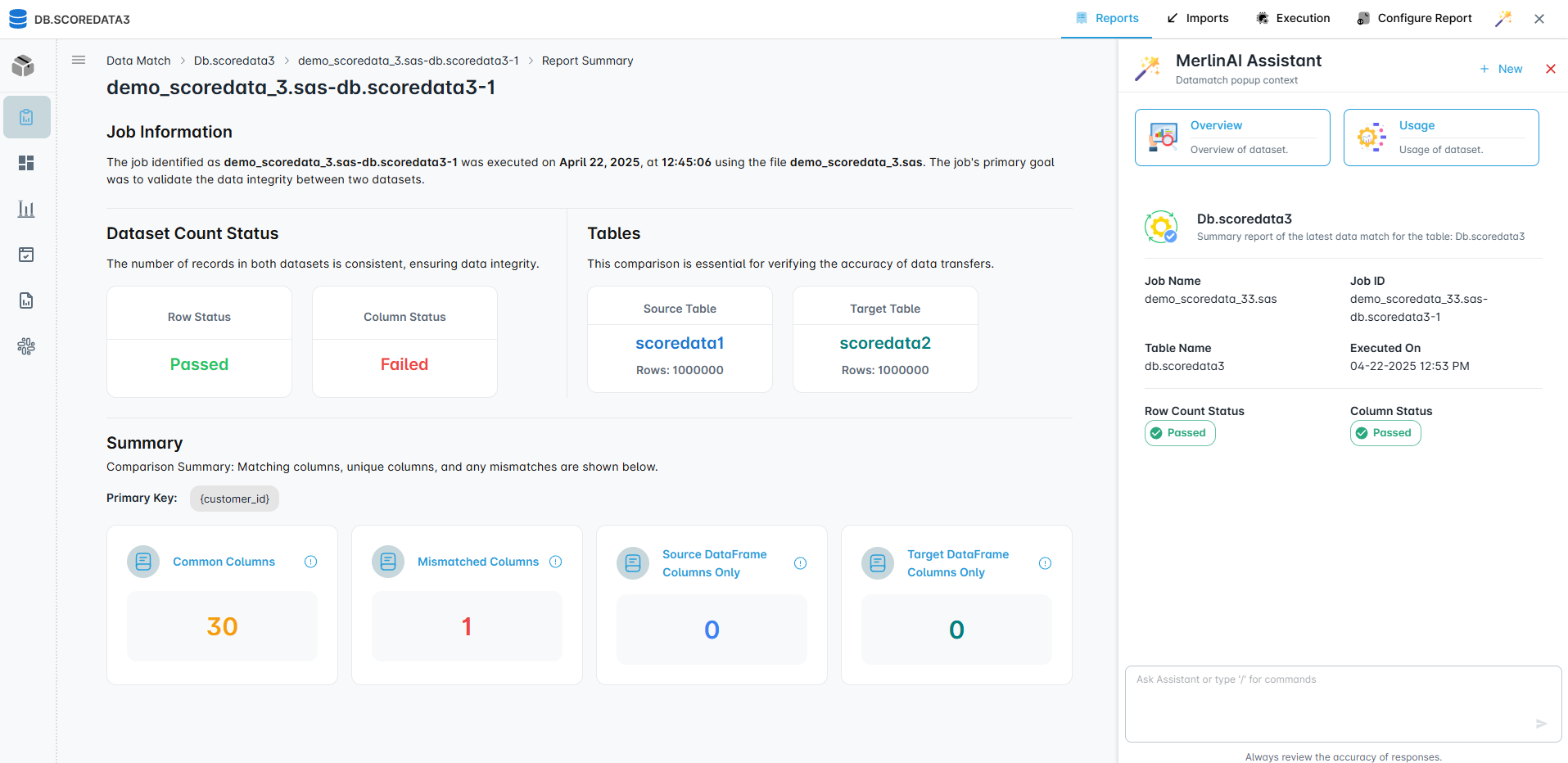

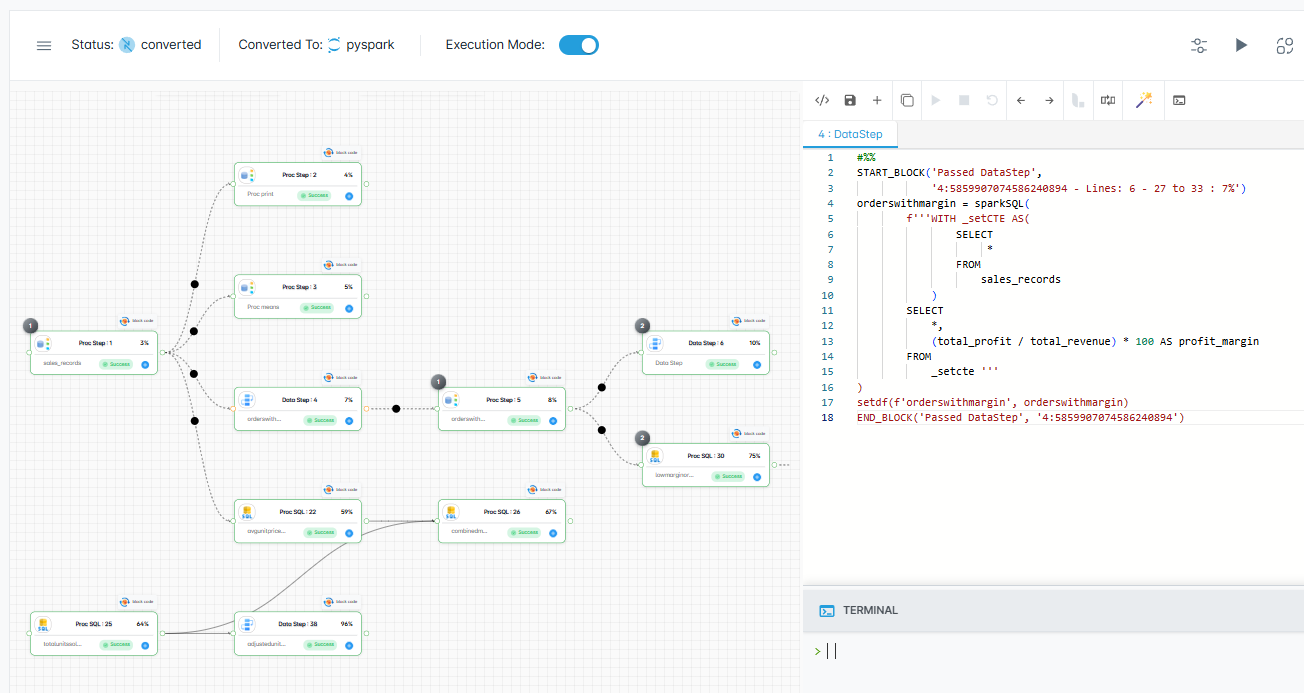

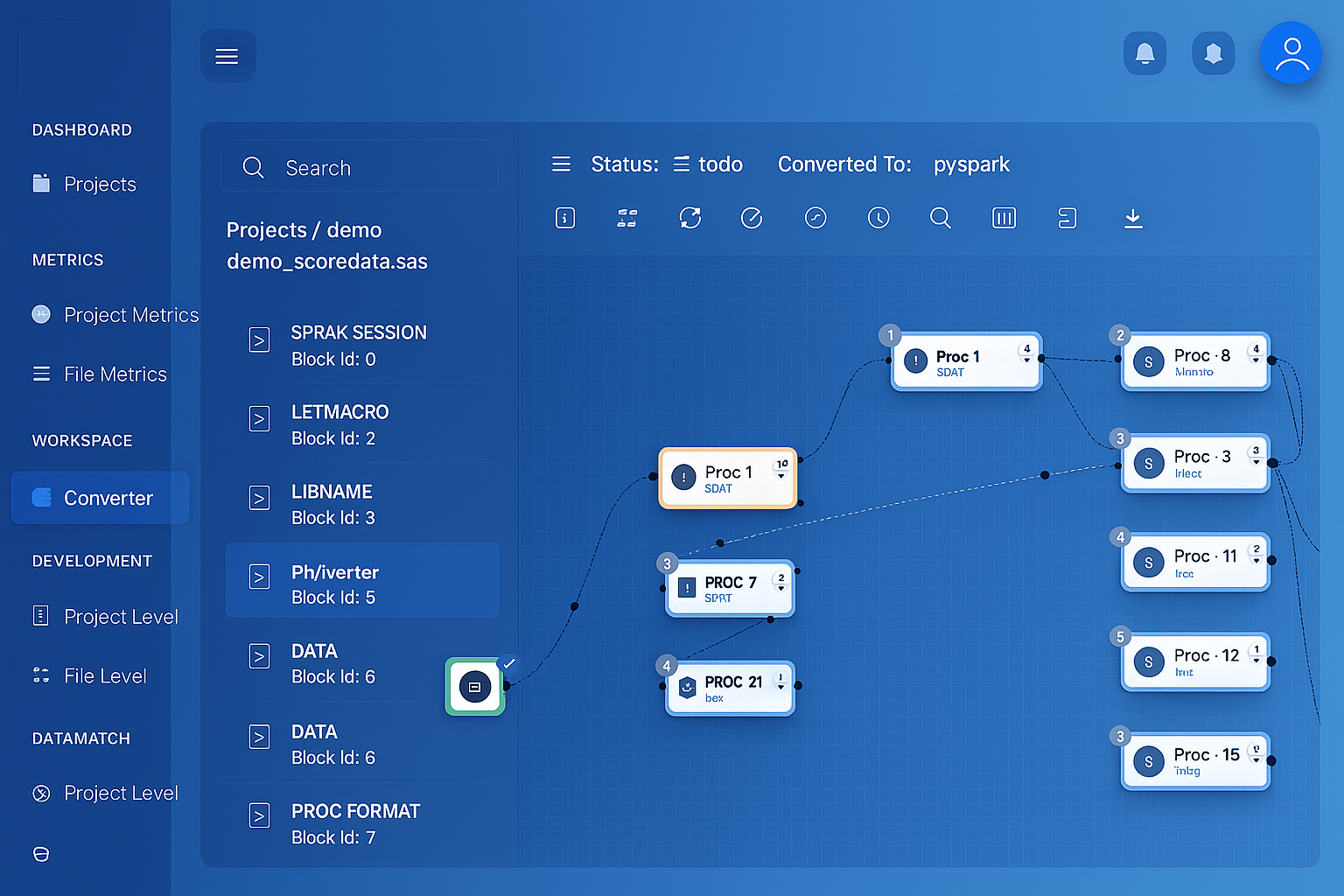

- Comprehensive dependency mapping with data and file lineage

- Development of required frameworks and standards

- Code complexity analysis, block labels, and lines-of-code (LoC) assessment

- Rationalization and standardization of current ETL